MLOps 101: From Notebooks to Production 2026

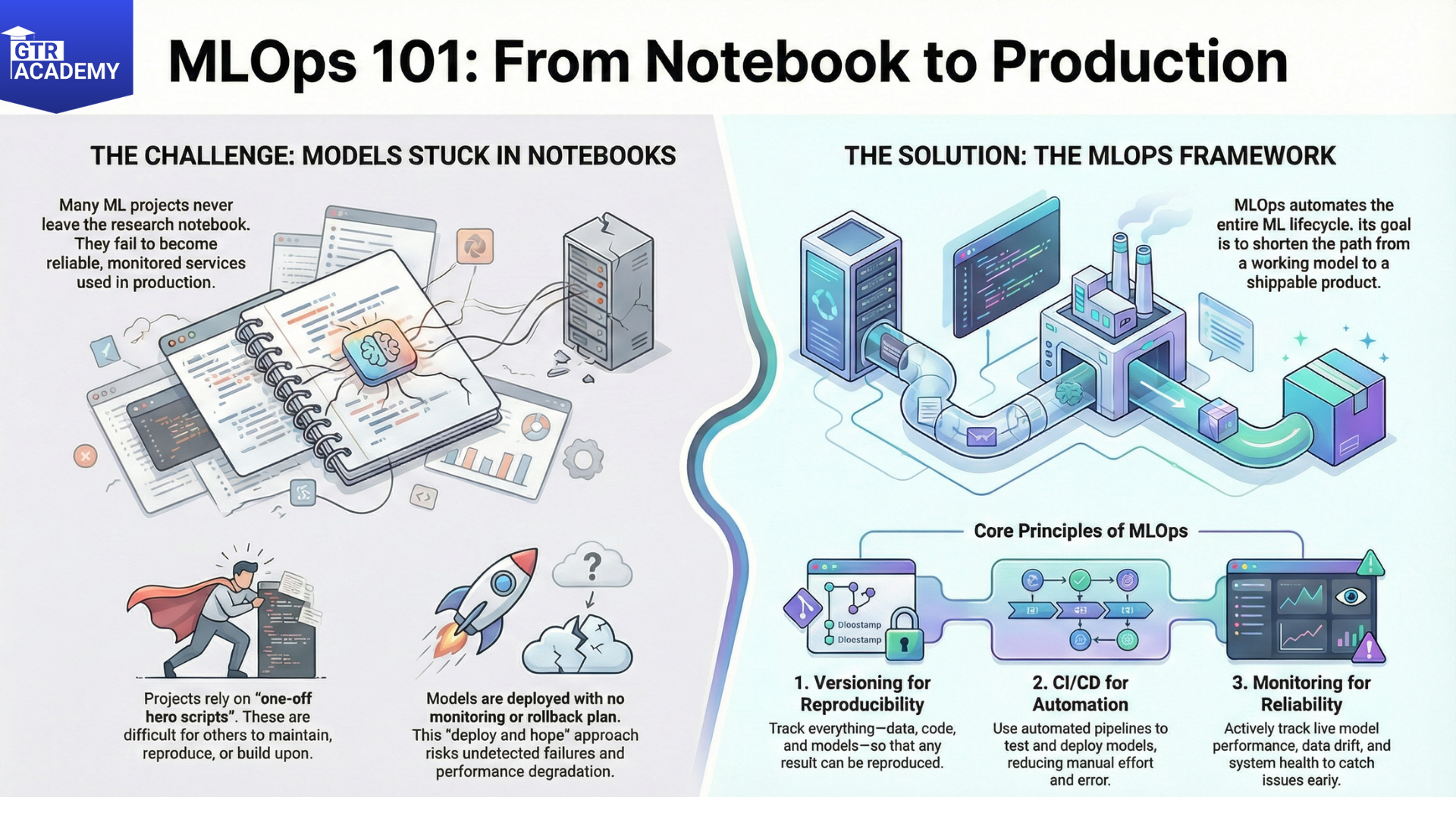

Only a small share of machine learning projects make it from notebook development to production. ML Ops (Machine Learning Operations) is concerned with turning those promising experiments into dependable, monitored, continuously running services.

What is ML Ops?

ML Ops is essentially DevOps for machine learning systems in production. It covers the entire lifecycle, from data and experimentation through deployment and ongoing monitoring:

- Versioning: Data, code, and models are all versioned so that any run can be reproduced.

- CI/CD for ML: Automated testing and deployment for both models and data pipelines.

- Monitoring: Tracking the performance, drift, latency, and failures of live models.
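To make the versioning idea concrete, here is a minimal sketch that derives one deterministic ID from the three things that define a run: the data, the code version, and the parameters. The function name `fingerprint_run` and the sample values are hypothetical, chosen only for illustration. Dedicated data and model versioning tools do far more than this.

```python
import hashlib
import json


def fingerprint_run(data: bytes, code_version: str, params: dict) -> str:
    """Return a short, deterministic ID for a (data, code, params) combination."""
    h = hashlib.sha256()
    # Content hash of the dataset, so any change to the data changes the ID.
    h.update(hashlib.sha256(data).digest())
    h.update(b"\0")  # separator so adjacent fields cannot run together
    # The code version could be a git commit SHA (an assumption, not a requirement).
    h.update(code_version.encode("utf-8"))
    h.update(b"\0")
    # sort_keys makes the ID independent of dict insertion order.
    h.update(json.dumps(params, sort_keys=True).encode("utf-8"))
    return h.hexdigest()[:12]


if __name__ == "__main__":
    sample_data = b"age,income\n34,52000\n"
    print(fingerprint_run(sample_data, "abc1234", {"lr": 0.1, "depth": 3}))
```

Two runs with the same fingerprint used the same data, code, and parameters, which is the precondition for comparing or reproducing them.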

The purpose is to shorten the journey from "I have a model that works in a notebook" to "We can safely ship and update this model in production."

Key components of an ML Ops pipeline

An ML Ops pipeline can be divided into a simple, end-to-end flow:

- Data pipelines: Data ingestion, cleaning, and feature creation are scheduled and run through orchestration tools such as Airflow or Prefect.

- Experiment tracking: Tools that log the parameters, metrics, and artifacts of each run so the team can compare and reproduce experiments.

- Model packaging and deployment: Models are containerized (for instance, with Docker) and then deployed as batch jobs, APIs, or streaming components.

- Monitoring and alerting: Keeping an eye on technical metrics (latency, errors) as well as model and business metrics (accuracy, drift indicators, conversion lift).
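The experiment-tracking step above can be sketched with a tiny in-memory tracker. This is an illustration under stated assumptions, not the API of any real tracking tool: the `Run` and `Tracker` classes and their method names are hypothetical, and a real tracker would persist runs to a server or shared store rather than a Python list.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Run:
    name: str
    params: dict
    metrics: dict = field(default_factory=dict)
    artifacts: list = field(default_factory=list)


class Tracker:
    """Hypothetical in-memory experiment tracker, for illustration only."""

    def __init__(self):
        self.runs = []

    def log_run(self, name, params, metrics, artifacts=()):
        # Copy the inputs so later mutation by the caller does not alter the log.
        run = Run(name, dict(params), dict(metrics), list(artifacts))
        self.runs.append(run)
        return run

    def best(self, metric, higher_is_better=True):
        # Only consider runs that actually logged the requested metric.
        candidates = [r for r in self.runs if metric in r.metrics]
        if not candidates:
            raise ValueError(f"no run logged metric {metric!r}")
        pick = max if higher_is_better else min
        return pick(candidates, key=lambda r: r.metrics[metric])

    def to_jsonl(self):
        # One JSON object per line, a simple format to append to and diff.
        return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in self.runs)


if __name__ == "__main__":
    tracker = Tracker()
    tracker.log_run("baseline", {"lr": 0.1}, {"accuracy": 0.81})
    tracker.log_run("tuned", {"lr": 0.01}, {"accuracy": 0.86}, ["model.pkl"])
    print(tracker.best("accuracy").name)
    print(tracker.to_jsonl())
```

Logging parameters alongside metrics is what makes comparison meaningful: the best run can be traced back to the exact settings that produced it.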

Many cloud platforms now provide fully integrated ML Ops services, but the core principles apply regardless of the technology stack.

Common pitfalls and better habits

- Lack of environment parity: models perform differently between dev, staging, and prod.

- No monitoring or rollback plan: you release once and cross your fingers.

- Better habit: start small. Put a single model into production with minimal but real ML Ops (basic CI, environment parity, simple monitoring) before trying to build an enterprise-scale platform.

The next post will walk through a light ML Ops example: taking a notebook model, wrapping it in an API, and adding simple monitoring, so that you can see how the various parts connect and work together.
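As a small preview of what "simple monitoring" with a rollback plan might look like, here is a sketch of a rolling-window monitor that flags when a deployed model should be rolled back. The class name `RollingMonitor`, the window size, and the thresholds are all hypothetical values chosen for illustration. A production setup would more likely export these metrics to a monitoring system than compute them in-process.

```python
import math
from collections import deque


class RollingMonitor:
    """Hypothetical monitor over the most recent `window` requests."""

    def __init__(self, window=100, max_error_rate=0.05, max_p95_latency_ms=500.0):
        self.window = window
        self.max_error_rate = max_error_rate
        self.max_p95_latency_ms = max_p95_latency_ms
        # Each record is (latency_ms, ok); old records fall off automatically.
        self.records = deque(maxlen=window)

    def record(self, latency_ms, ok):
        self.records.append((float(latency_ms), bool(ok)))

    def error_rate(self):
        if not self.records:
            return 0.0
        failures = sum(1 for _, ok in self.records if not ok)
        return failures / len(self.records)

    def p95_latency_ms(self):
        if not self.records:
            return 0.0
        latencies = sorted(latency for latency, _ in self.records)
        # Nearest-rank percentile: the smallest value covering 95% of requests.
        index = max(0, math.ceil(0.95 * len(latencies)) - 1)
        return latencies[index]

    def should_roll_back(self):
        # Wait for a full window so a single early failure does not trigger.
        if len(self.records) < self.window:
            return False
        return (
            self.error_rate() > self.max_error_rate
            or self.p95_latency_ms() > self.max_p95_latency_ms
        )


if __name__ == "__main__":
    monitor = RollingMonitor(window=10, max_error_rate=0.1)
    for _ in range(8):
        monitor.record(latency_ms=120, ok=True)
    for _ in range(2):
        monitor.record(latency_ms=120, ok=False)
    print(monitor.error_rate(), monitor.should_roll_back())
```

The point of the sketch is the decision rule: a release is paired with explicit thresholds and an action, rather than shipped once and left unobserved.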